Ad-Hoc Testing Done Right: Adding Value Without Chaos

The Tester Who Broke the Build by Not Following the Plan

The release was scheduled for Friday. Every test case had passed. Every charter had been executed. The coverage map was green across the board. The team was confident.

Then, on Thursday afternoon, a developer named Marcus wandered over to the QA lead’s desk. “Hey, weird question — has anyone tried using the app while switching between WiFi and cellular? I was on the train this morning and the whole thing just… froze.” He hadn’t been assigned to test this. There was no charter for it. No test case covered it. He’d simply been using the application as a regular person and stumbled into a critical failure.

The QA lead grabbed the app and spent fifteen minutes doing exactly what Marcus described — toggling network connections, letting the signal drop and reconnect, switching between networks mid-transaction. Within those fifteen minutes, she’d found a data corruption bug that would have affected every mobile user with an unstable connection. No session report. No charter. No formal structure at all. Just a hunch, a quick investigation, and a release-saving discovery.

This is ad-hoc testing at its best. Unplanned, unscripted, driven by instinct and opportunity — and devastatingly effective.

It’s also the kind of testing that gets a terrible reputation, because for every story like Marcus’s, there are a hundred stories of testers “doing ad-hoc testing” as a euphemism for aimless clicking with nothing to show for it. The difference between the two isn’t whether you have a plan. It’s whether you have a purpose.

We’ve spent the last two articles building frameworks for structured exploratory testing — Session-Based Test Management for organizing sessions, and charter writing for focusing each mission. Now we need to talk about what happens when you deliberately set all of that aside. Because the most complete testing strategy includes room for the unplanned, the instinctive, and the spontaneous — as long as you know how to make it count.

What Ad-Hoc Testing Actually Means

The term “ad-hoc” comes from Latin, meaning “for this” — for this particular purpose, for this specific situation. It doesn’t mean random, careless, or undisciplined. It means responsive. Situational. Arising from the moment rather than from a plan written days or weeks earlier.

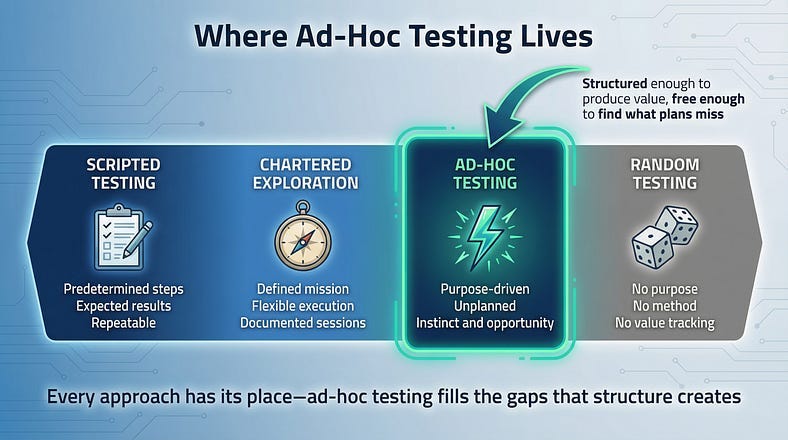

Ad-hoc testing is testing performed without predefined test cases, charters, or formal session structures. The tester decides what to test, how to test it, and when to stop based on their judgment, knowledge, and observations in real time. There’s no documentation requirement before the session, no formal time-box, and no predetermined scope.

This definition immediately raises a question that anyone who read our previous articles should be asking: how is this different from the unfocused exploration that SBTM was designed to fix? It’s a fair question, and the answer lies in intent and context.

Unfocused exploration is someone sitting down without a plan because they don’t have one. Ad-hoc testing is someone setting aside their plan because they have a reason to. The first is a gap in process. The second is a deliberate testing technique deployed in situations where formal structure would actually slow you down or blind you to important discoveries.

The distinction is subtle but essential. A tester doing ad-hoc testing well brings the same skills, instincts, and domain knowledge they’d bring to a chartered session. They just aren’t constraining themselves to a predefined mission — because the situation calls for something more fluid.

When Ad-Hoc Testing Is the Right Choice

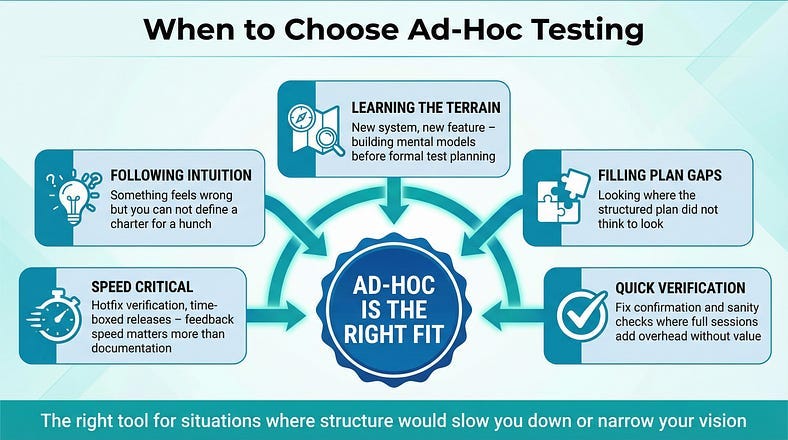

Ad-hoc testing isn’t a fallback when you don’t have time for “real” testing. It’s a first-choice approach in specific situations where its strengths matter more than formal structure.

When You Need Speed Over Documentation

A critical hotfix just landed in staging. The release is in two hours. You don’t have time to write charters, set up sessions, and conduct formal debriefs. You need a skilled tester to spend thirty minutes hammering the fix and its surrounding functionality, find anything broken, and report back. The value is in the speed of feedback, not the formality of the process.

When Something Feels Wrong

Experienced testers develop a sense — call it intuition, pattern recognition, or professional paranoia — that something isn’t right. Maybe the page loaded slightly slower than usual. Maybe a dropdown had an option you don’t remember seeing before. Maybe the error message used different phrasing than elsewhere in the app. These hunches don’t fit neatly into a charter because they’re not hypotheses yet. They’re whispers. Ad-hoc testing is how you follow those whispers to see if they lead somewhere.

When You’re Learning a New System

Before you can write effective charters, you need to understand the terrain. Ad-hoc testing is invaluable during initial exploration of an unfamiliar application. You’re not looking for specific bugs — you’re building a mental model of how the system works, where its boundaries are, and what its personality is. This reconnaissance phase informs all the structured testing that follows.

When the Formal Plan Has Blind Spots

Every test plan, no matter how thorough, has gaps. The charters you wrote cover what you anticipated. Ad-hoc testing covers what you didn’t. It’s the testing equivalent of looking in the places you weren’t planning to look — and it regularly finds issues precisely because those areas received less attention from structured approaches.

When You’re Verifying a Fix

A developer says a bug is fixed. You need to confirm the fix works and do a quick sanity check of related functionality. Spinning up a full SBTM session for a five-minute verification would be overhead without value. Ad-hoc testing fits perfectly here — quick, focused, and proportional to the task.

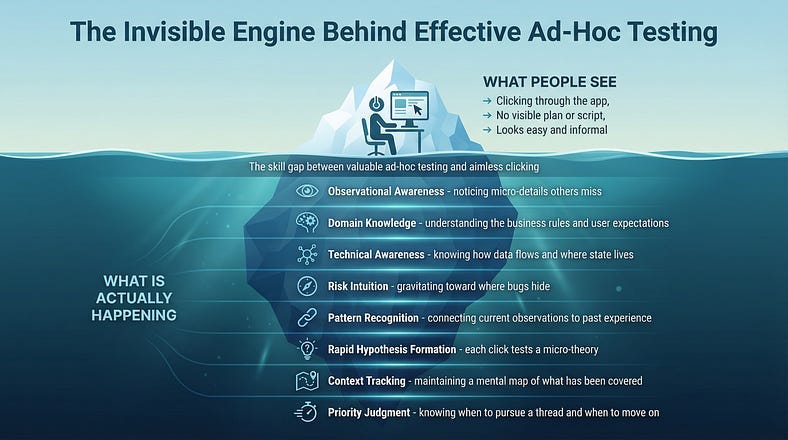

What Separates Good Ad-Hoc Testing from Aimless Clicking

Here’s the uncomfortable truth: most ad-hoc testing is bad. Not because the concept is flawed, but because testers use “ad-hoc” as permission to stop thinking carefully about what they’re doing. The label becomes an excuse rather than a technique.

Good ad-hoc testing and aimless clicking look identical from the outside — someone interacting with software without following a script. The difference is entirely internal: what’s happening in the tester’s mind.

A tester doing ad-hoc testing well is constantly making micro-decisions: “I’m going to try submitting this form with the required fields empty because the validation on the previous page was inconsistent.” That’s not random. It’s a hypothesis formed from an observation, tested through a deliberate action, and evaluated against expected behavior. They might not write any of this down in the moment, but they’re executing a rapid cycle of observe, hypothesize, test, evaluate. Over and over, with each action informed by the last.

A tester clicking aimlessly is doing something fundamentally different: “I’ll click this. Now I’ll click that. This seems fine. Let me go over here.” There’s no thread connecting the actions. No observations driving the next step. No mental model being built or challenged. It’s activity without cognition.

Three qualities distinguish effective ad-hoc testing:

Observational awareness. Good ad-hoc testers notice things. Not just “the button worked” but “the button took 400 milliseconds longer to respond than the identical button on the previous page.” They’re actively monitoring the application’s behavior, not just checking whether it crashes.

Responsive direction. Each action informs the next. If the tester notices that input validation seems lenient on one field, they immediately start probing other fields with the same approach. If a workflow handles errors gracefully in one scenario, they try to find a scenario where it doesn’t. The investigation evolves in real time based on what the system reveals.

Internal narration. Even without formal documentation, good ad-hoc testers maintain an internal narrative: “I’m exploring this because I noticed that. This result suggests I should try that next. This area seems solid; I’ll move on to something I’m less confident about.” This narration is what makes the testing purposeful rather than random.

The Skills That Make Ad-Hoc Testing Work

Ad-hoc testing is often treated as the easiest form of testing — just click around and see what happens. In reality, it’s one of the most demanding. Without a charter to guide your attention or a script to tell you what’s next, you’re relying entirely on your own skills to produce value. Those skills need to be strong.

Domain Knowledge

You can’t follow your instincts about a system you don’t understand. The QA lead in our opening story didn’t turn Marcus’s report into a data corruption bug by luck. She knew enough about the application’s architecture to understand immediately why toggling networks mid-transaction would be dangerous. Domain knowledge turns random observations into testable hypotheses.

Technical Awareness

Understanding what’s happening beneath the interface — how data flows, where state is stored, which components talk to each other — lets you make smarter choices about what to probe. A tester who knows the application uses client-side caching will instinctively test scenarios where cached data might become stale. A tester without that knowledge won’t know to look.

Risk Intuition

Experienced testers develop a sense for where bugs hide. Complex integrations, recently changed code, features built under deadline pressure, areas where requirements were ambiguous — these are the places ad-hoc testing should gravitate toward. This risk sense isn’t magic; it’s pattern recognition built from years of finding bugs and understanding why they occurred.

Rapid Context Switching

Ad-hoc testing often requires following threads wherever they lead. You might start exploring the search function, notice something odd about pagination, follow that thread to discover a data loading issue, and then trace that back to an API response format problem. The ability to follow these investigative chains without losing track of where you’ve been — and to know when to follow a thread versus when to return to your original focus — is a skill that takes practice to develop.

Giving Ad-Hoc Testing Just Enough Structure

The paradox of ad-hoc testing done right is that it benefits from a small amount of structure — not enough to turn it into something else, but enough to ensure it produces value.

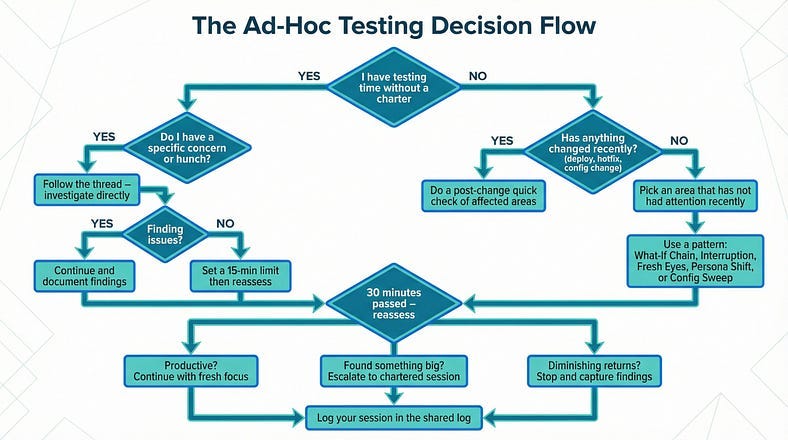

Set a Purpose, Not a Plan

Before starting, answer one question: Why am I doing ad-hoc testing right now? The answer might be “because a hotfix just dropped and I need to verify it,” or “because I have a nagging feeling about the profile section,” or “because we haven’t looked at this area with fresh eyes in weeks.” That purpose isn’t a charter — it’s a reason. It gives your session a seed of direction without constraining your exploration.

Time-Awareness Without Time-Boxing

You don’t need a formal 90-minute session boundary, but you should be aware of time. Set a soft checkpoint — “I’ll reassess after 30 minutes whether I’m finding anything worth continuing.” This prevents the two biggest ad-hoc testing failure modes: stopping too soon before you’ve found anything meaningful, and rabbit-holing for three hours on something that stopped being productive after twenty minutes.

Capture the Highlights

You don’t need session notes in the SBTM sense, but you should capture your key findings. This can be as simple as a quick message to the team channel: “Spent 20 minutes doing ad-hoc testing on the profile section. Found that changing your email while a password reset is pending locks you out of both flows. Filed as BUG-2847.” That short message transforms invisible testing into visible value.

The threshold for documentation should be low: if you found something worth knowing, capture it somewhere your team will see it. If you found nothing notable, a quick mental note of “I looked at X and it seemed solid” is sufficient.

Know When to Escalate to Structure

Sometimes ad-hoc testing reveals that an area needs much more attention than a quick exploration can provide. This is the moment to shift gears: “I started doing ad-hoc testing on the notifications feature and found three issues in ten minutes. This area needs dedicated charter-based sessions.” Recognizing when ad-hoc has served its purpose and formal exploration should take over is a sign of testing maturity.

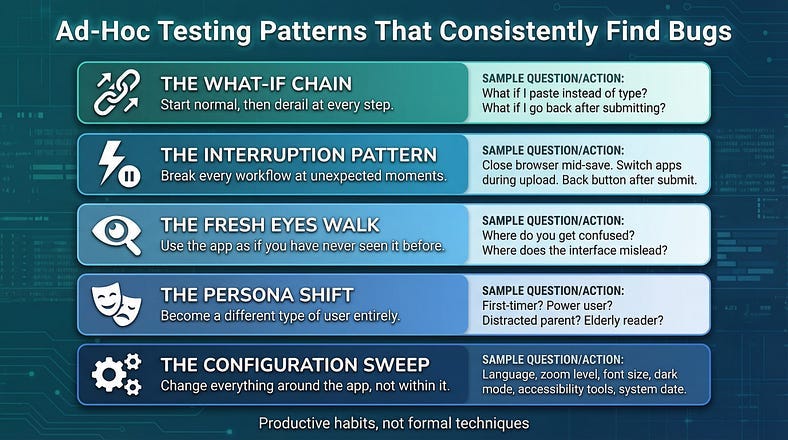

Ad-Hoc Testing in Practice: Patterns That Work

Over time, experienced testers develop recurring ad-hoc testing patterns — approaches they reach for repeatedly because they consistently surface issues. These aren’t formal techniques so much as productive habits.

The “What If” Chain

Start with any normal action and then ask “what if” at every step. What if I go back after submitting? What if I open this in two tabs? What if I change my timezone between starting and finishing this workflow? What if I paste in a value instead of typing it? Each “what if” is a micro-experiment. Most will reveal nothing. But the chain often leads to unexpected territory that scripted tests never reach.

The Interruption Pattern

Use the application normally, but interrupt every workflow at unexpected points. Close the browser mid-save. Navigate away during a file upload. Hit the back button after submitting a form but before seeing the confirmation. Switch apps on mobile while a process is running. Real users interrupt workflows constantly — through impatience, accidental taps, network hiccups, or phone calls. Planned testing rarely accounts for all the ways these interruptions can cause trouble.

The Fresh Eyes Walk

Open the application as if you’ve never seen it before. Ignore what you know about how it’s supposed to work and just use it. Where do you get confused? Where does the interface suggest one thing but do another? Where do you have to read instructions to understand what should be obvious? This pattern is less about bugs and more about usability — but it regularly surfaces functional issues too, because confused users do unexpected things, and unexpected things expose unexpected bugs.

The Persona Shift

Stop testing as a tester and start using the application as a specific type of user. A first-time visitor who doesn’t understand the terminology. A power user who does everything with keyboard shortcuts. A distracted parent trying to complete a purchase while managing a toddler. An elderly user who reads every label carefully before clicking anything. Each persona naturally leads you to interact with the application differently, revealing issues that your tester habits might cause you to skip.

The Configuration Sweep

Change the conditions around the application rather than how you use it. Switch languages. Change the system font size. Enable dark mode. Use browser zoom at 150%. Set the date to December 31 at 11:58 PM. Turn on accessibility features like screen readers or high contrast mode. These environmental changes often expose issues that functional testing — which tends to assume a standard configuration — consistently misses.
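To make the sweep concrete, here is a minimal Python sketch that lists the environmental settings mentioned above and picks a few combinations at random to try by hand. The dimension names, the setting values, and the `all_configurations` and `pick_configurations` helpers are hypothetical placeholders, not part of any tool; swap in whatever your application actually supports.

```python
import itertools
import random

# Hypothetical configuration dimensions, based on the examples in the text.
DIMENSIONS = {
    "language": ["default", "alternate"],
    "font_size": ["default", "large"],
    "theme": ["light", "dark"],
    "browser_zoom": ["100%", "150%"],
    "system_clock": ["now", "Dec 31, 11:58 PM"],
    "accessibility": ["off", "screen reader", "high contrast"],
}


def all_configurations(dimensions):
    """Return every combination of settings as a list of dicts."""
    names = list(dimensions)
    return [
        dict(zip(names, values))
        for values in itertools.product(*(dimensions[name] for name in names))
    ]


def pick_configurations(dimensions, count, seed=None):
    """Pick up to `count` distinct configurations at random to try by hand."""
    rng = random.Random(seed)
    configs = all_configurations(dimensions)
    return rng.sample(configs, min(count, len(configs)))


configs = all_configurations(DIMENSIONS)
print(f"{len(configs)} possible configurations")
for config in pick_configurations(DIMENSIONS, count=3, seed=7):
    print(config)
```

Even this small set of dimensions yields 96 combinations, which is why a quick random pick suits ad-hoc work better than an exhaustive matrix. Passing a seed makes the pick repeatable if you want to note which configurations you tried.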

The Accountability Challenge (And How to Solve It)

Let’s address the elephant in the room. The biggest objection to ad-hoc testing — and it’s a legitimate one — is accountability. How do you know what was tested? How do you justify the time spent? How do you prevent it from becoming a black hole where testing hours disappear without visible results?

These concerns are why SBTM exists in the first place. We spent an entire article on how session-based management solves the accountability problem for exploratory testing. Now we’re advocating for testing that deliberately sidesteps that accountability framework. This seems contradictory, and it would be if ad-hoc testing were meant to replace structured exploration. It isn’t. It supplements it.

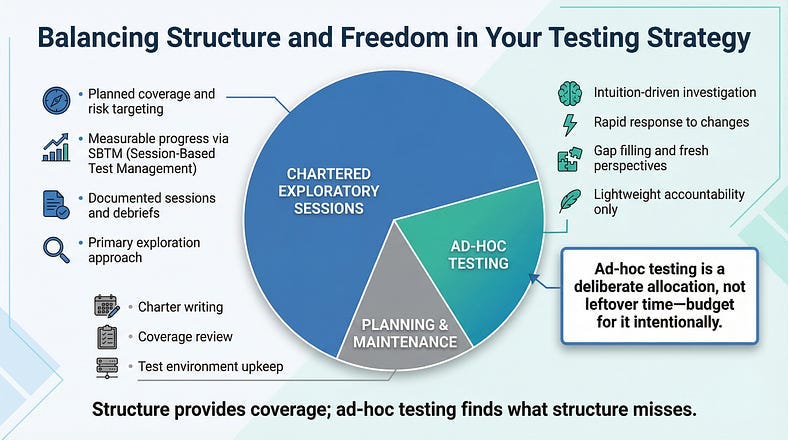

Think of your testing strategy as a budget. Structured, chartered sessions should account for the majority of your exploratory testing time, say 70–80%. That’s where your planned coverage, your risk-based targeting, and your measurable progress live. Ad-hoc testing should be a smaller, deliberate allocation, maybe 15–25% of your time. Any time left over goes to test maintenance, planning, and other activities.

The accountability solution for ad-hoc testing isn’t to apply full SBTM overhead to it — that would defeat its purpose. Instead, use lightweight mechanisms:

A shared log. A simple shared document, team channel, or wiki page where testers drop one-line summaries of ad-hoc testing they’ve done. “2/14 — Sarah — 20 min ad-hoc on checkout after payment gateway update. Found currency rounding issue in edge case (BUG-3201). No other issues noted.”

Bug attribution. When ad-hoc testing finds bugs, tag them. Being able to show that, say, 30% of your critical bugs were found through ad-hoc testing is a powerful argument for continuing the practice and for giving testers the freedom to do it.

Retrospective inclusion. In sprint retrospectives or testing reviews, include ad-hoc testing alongside structured sessions. “This sprint we completed 12 chartered sessions and testers spent approximately 8 hours on ad-hoc testing. The chartered sessions found 15 bugs; ad-hoc testing found 6, including 2 critical ones.” This makes the practice visible and its value measurable without burdening individual sessions with documentation overhead.
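A shared log entry can stay as small as a handful of fields. The following is a minimal Python sketch of one possible shape, assuming a team wants something slightly more regular than free text. The `AdHocLogEntry` class, its field names, and the `one_line` format are hypothetical, modeled on the example log line above rather than on any specific tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AdHocLogEntry:
    """One ad-hoc testing note for a shared log (hypothetical structure)."""
    date: str
    tester: str
    minutes: int
    area: str
    trigger: str  # e.g. "change", "hunch", "gap-fill", "scheduled"
    findings: str = "No issues noted."
    bugs_filed: List[str] = field(default_factory=list)

    def one_line(self) -> str:
        """Render the entry as a single line suitable for a team channel."""
        bugs = ", ".join(self.bugs_filed) if self.bugs_filed else "none"
        return (
            f"{self.date} | {self.tester} | {self.minutes} min ad-hoc on "
            f"{self.area} ({self.trigger}) | {self.findings} | bugs: {bugs}"
        )


# Example values mirror the sample log line in the text.
entry = AdHocLogEntry(
    date="2/14",
    tester="Sarah",
    minutes=20,
    area="checkout",
    trigger="change",
    findings="Currency rounding issue in edge case.",
    bugs_filed=["BUG-3201"],
)
print(entry.one_line())
```

Recording the bug IDs on the entry itself is what makes bug attribution cheap: the same record answers both "what was looked at" and "what did it find".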

Ad-Hoc Testing and AI: The Human Advantage

AI-powered testing tools are getting better at executing scripted scenarios, generating test cases from requirements, and even performing some forms of automated exploration. But ad-hoc testing represents something that AI fundamentally cannot replicate: the ability to notice that something feels wrong, to connect an observation in the current session with a conversation you overheard in the hallway last week, to think “a real user would never do it this way” and then test the way a real user actually would.

When AI generates code, the testing assumptions it produces will be internally consistent with that code. As we discussed in our charter writing article, this creates correlated blind spots. Ad-hoc testing driven by human intuition is perhaps the strongest countermeasure against these blind spots, because it’s fundamentally uncorrelated with the AI’s reasoning. The tester isn’t following the AI’s logic — they’re bringing their own entirely independent perspective.

This doesn’t mean ad-hoc testing should ignore AI capabilities. AI tools can actually enhance ad-hoc testing in useful ways: quickly generating test data when you need unusual inputs, summarizing recent code changes to inform your instincts about where to look, or helping you understand unfamiliar system components so your ad-hoc exploration is better informed. The key insight is that AI serves as a tool supporting human-directed ad-hoc testing, not as a replacement for the human judgment that drives it.

In an era where more code is generated by AI and more test cases are written by AI, the unscripted, intuition-driven investigation that a skilled human tester brings becomes not less important, but more. Your ability to test in ways that no algorithm anticipated is an increasingly rare and valuable skill.

Common Mistakes (And How to Avoid Them)

Ad-hoc testing has predictable failure modes. Knowing them helps you steer clear.

Mistake: Using ad-hoc as a default instead of a choice. If every testing session is ad-hoc, you don’t have a strategy — you have a gap in process. Ad-hoc testing is most powerful when it complements structured testing, not when it substitutes for it. If you find yourself never writing charters or session reports, the problem isn’t that ad-hoc testing is your preferred style — it’s that you’re avoiding the discipline of planning.

Mistake: Confusing thoroughness with duration. Spending four hours on ad-hoc testing doesn’t mean you tested four hours’ worth of things. Without the natural rhythm of session time-boxes and debriefs, it’s easy to spiral into deep investigation of minor issues while major areas go unexplored. Check in with yourself regularly: is the thread I’m pulling still worth pulling?

Mistake: Never recording anything. The belief that ad-hoc testing means zero documentation is a misunderstanding. It means minimal documentation — but not none. If you find a bug, report it. If you cover an area and it looks solid, note it somewhere. If you discover something that should inform future chartered sessions, capture that insight. The overhead should be light, but it shouldn’t be nonexistent.

Mistake: Only doing the fun parts. Without a charter directing your attention, you’ll naturally gravitate toward features and scenarios that interest you — which are often the same ones you always test. Good ad-hoc testing deliberately pushes into uncomfortable or overlooked areas. Force yourself to explore the admin settings, the error pages, the edge cases that nobody finds exciting. That’s often where the bugs are hiding.

Mistake: Not knowing when to stop. Ad-hoc testing without any time awareness can expand to fill all available time. Set soft boundaries. If you’ve been exploring for 30 minutes without finding anything notable, that’s useful information — the area might be solid, or your approach might need changing. Either way, don’t keep clicking out of obligation.

Building Ad-Hoc Testing Into Your Process

If ad-hoc testing is valuable, it shouldn’t depend on individual testers happening to do it. It should be a deliberate part of your testing process.

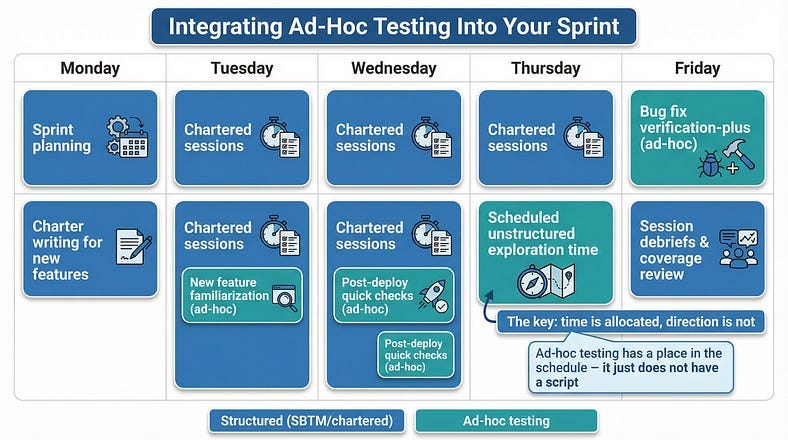

Scheduled Unstructured Time

This sounds contradictory — scheduling something unstructured — but it works. Reserve time each sprint specifically for ad-hoc testing. “Every Thursday afternoon, testers have two hours for ad-hoc exploration.” The time is allocated; how it’s used is up to each tester’s judgment. This legitimizes the practice and ensures it actually happens rather than being perpetually crowded out by planned work.

Post-Change Quick Checks

Build a team habit of brief ad-hoc testing after significant code changes land. Not a full chartered session — just ten or fifteen minutes of a tester poking around the affected area and its neighbors. This catches integration issues and unexpected side effects early, when they’re cheapest to fix.

New Feature Familiarization

Before writing charters for a new feature, have testers spend 20–30 minutes in unstructured exploration. This reconnaissance builds the understanding needed to write effective charters. It’s ad-hoc testing in service of structured testing — the two approaches reinforcing each other.

Bug Fix Verification Plus

When verifying bug fixes, encourage testers to spend a few extra minutes exploring the surrounding area. The fix might be correct, but did it introduce anything else? This “verification plus” habit catches regressions that formal regression testing might miss, because the tester is exploring with fresh context about what changed and why.

Measuring What You Can’t Plan

You can’t measure ad-hoc testing the same way you measure chartered sessions. There’s no TDE/BIR/Setup breakdown, no coverage map, no charter completion percentage. But that doesn’t mean it’s immeasurable.

Track these indicators over time to understand whether your ad-hoc testing is delivering value:

Bugs found per ad-hoc hour. Not to set targets — that would create perverse incentives — but to understand the practice’s productivity. If ad-hoc testing consistently finds zero bugs over multiple sprints, either your application is remarkably stable or your ad-hoc approach needs rethinking.

Bug severity from ad-hoc vs. structured. In many teams, ad-hoc testing finds a disproportionate number of high-severity bugs relative to its time investment. This makes sense — ad-hoc testing gravitates toward unusual scenarios that planned testing doesn’t cover, and unusual scenarios are where critical failures often live.

Areas covered. Even without formal coverage tracking, a shared log of ad-hoc testing activities shows which areas are getting attention and which aren’t. If every tester’s ad-hoc time gravitates toward the same features, you’re getting redundant coverage instead of breadth.

Insights generated. Some of the most valuable output from ad-hoc testing isn’t bugs — it’s observations that inform future testing. “The offline mode seems fragile — we should write charters for a dedicated exploration” is worth more than the ad-hoc session that produced it.
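The first three indicators reduce to simple arithmetic over a bug list and a shared log. Here is a minimal Python sketch under stated assumptions: the data is purely illustrative, and the tuple layouts and the `critical_share` helper are hypothetical rather than drawn from any particular tracker.

```python
from collections import Counter

# Illustrative data only: (source, severity) for each bug found in a sprint.
bugs = [
    ("ad-hoc", "critical"), ("ad-hoc", "critical"), ("ad-hoc", "minor"),
    ("chartered", "critical"), ("chartered", "major"),
    ("chartered", "minor"), ("chartered", "minor"),
]
# Illustrative data only: (area, minutes) for each ad-hoc entry in the shared log.
adhoc_log = [("checkout", 20), ("profile", 30), ("checkout", 40), ("notifications", 30)]

# Bugs found per ad-hoc hour.
adhoc_hours = sum(minutes for _, minutes in adhoc_log) / 60
adhoc_bugs = sum(1 for source, _ in bugs if source == "ad-hoc")
bugs_per_adhoc_hour = adhoc_bugs / adhoc_hours if adhoc_hours else 0.0


def critical_share(bug_list, source):
    """Fraction of all critical bugs that were found by the given source."""
    critical_sources = [s for s, severity in bug_list if severity == "critical"]
    if not critical_sources:
        return 0.0
    return critical_sources.count(source) / len(critical_sources)


# Minutes of ad-hoc attention per area, to spot redundant or missing coverage.
minutes_by_area = Counter()
for area, minutes in adhoc_log:
    minutes_by_area[area] += minutes

print(f"Bugs per ad-hoc hour: {bugs_per_adhoc_hour:.2f}")
print(f"Share of critical bugs from ad-hoc: {critical_share(bugs, 'ad-hoc'):.0%}")
print(f"Ad-hoc minutes by area: {dict(minutes_by_area)}")
```

These numbers are for spotting trends across sprints, not for setting per-tester targets.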

Your Ad-Hoc Testing Quick-Start Guide

Ready to make ad-hoc testing a deliberate, valuable part of your practice? Here’s what you need.

AD-HOC TESTING QUICK-START

============================

BEFORE YOU START

Purpose check: Why am I doing ad-hoc testing right now?

[ ] Responding to a change or hotfix

[ ] Following a hunch or intuition

[ ] Learning an unfamiliar area

[ ] Filling gaps in planned coverage

[ ] Quick verification of a fix

[ ] Scheduled unstructured time

Soft time limit: _____ minutes

(Reassess at this point: continue, stop, or escalate to a chartered session)

DURING THE SESSION

Stay observant:

- Notice response times, visual inconsistencies, unexpected behaviors

- Follow threads when observations lead somewhere

- Shift approaches when one angle stops producing results

Ask yourself periodically:

- Am I still finding useful information?

- Should this become a chartered session?

- Have I drifted into comfortable territory instead of pushing into unexplored areas?

AFTER THE SESSION

Capture the essentials:

- Bugs found → file them, tag as ad-hoc

- Areas explored → one-line summary to shared log

- Insights for future testing → note them for charter planning

- Nothing found → that’s useful data too; note that the area seems stable

SHARED LOG ENTRY FORMAT

Date: _____

Tester: _____

Duration: _____ min

Area(s) explored: _____

Trigger: (hotfix / hunch / gap-fill / scheduled / other)

Findings: _____

Bugs filed: _____

Follow-up needed: Yes / No

Notes: _____

The Right to Roam

There’s a concept in land access law called the “right to roam” — the public’s right to walk across open land without following designated paths. It exists because path networks, no matter how well-planned, can never cover every part of the landscape. Some discoveries only happen when you leave the trail.

Ad-hoc testing is the right to roam in your testing strategy. Your chartered sessions are the well-maintained paths — they cover the important routes, they’re documented, and they ensure consistent coverage. But the landscape of your application extends beyond those paths, and some of its most important features (and most dangerous bugs) exist in territory that no path was built to reach.

The testing teams that find the most bugs, build the deepest understanding of their applications, and provide the most reliable quality assessments are the ones that combine the discipline of structured exploration with the freedom of purposeful, skilled ad-hoc investigation. Not one or the other. Both, in deliberate proportion, each making the other more effective.

Don’t let anyone tell you that ad-hoc testing is unprofessional. And don’t let anyone tell you it’s sufficient on its own. Done right — with purpose, with skill, with just enough structure to stay accountable — it’s one of the most powerful tools in a tester’s kit.

In our next article, we’ll explore Monkey Testing Explained — what happens when you push even further past structure into deliberately random, chaotic interaction with your software. We’ll examine how random testing with genuine purpose reveals resilience problems that no amount of human logic would think to test, and where the line falls between productive chaos and wasted effort.

Remember: Ad-hoc testing isn’t the absence of a plan — it’s the presence of a purpose.